Most of us spend most of our time working on immediate problems: designing a new site, adding a feature to an app, revising a specification, and so on. We all need to focus on these short-term problems, but sometimes it is useful to step back and look at the larger context within which we are working.

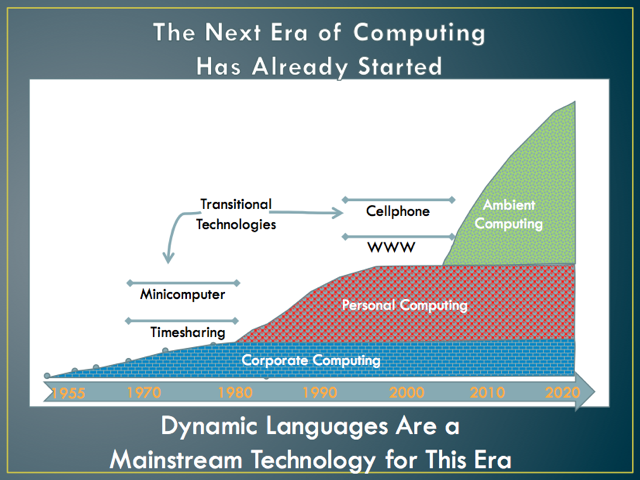

Last fall at the Dynamic Language Symposium I gave a talk that concluded with this slide:

It abstracts some key points of my perspective on the history and near future of computing. In this and some future posts I’ll be writing about some of those ideas. For readers with an analytic bent, don’t worry too much about what the y-axis represents. This is a conceptual timeline, not a graph of any actual data. The y-axis was intended to be something like overall impact of computing upon average individuals but can also be seen as an abstraction of other relevant factors such as economic impact.

The most important idea from the slide is that, in my view, there have been three major “eras” of computing (or, if you prefer, of the “information age”). Each of these eras represents a major difference in the role computers play in human life and society. The three eras also correspond to major shifts in the dominant form of computing devices and software. We are currently in the early days of the third era.

The first era was the Corporate Computing Era. It was focused on using computers to enhance and empower large organizations such as commercial enterprises and governments. Its applications were largely about collecting and processing large amounts of schematized data. Databases and transaction processing were key technologies.

During this era, if you “used a computer” it would have been in the context of such an organization. However, the concept of “using a computer” is anachronistic to that era. Very few individuals had any direct contact with computing, and for most of those who did, the contact was only via corporate information systems that supported some aspects of their jobs.

The Corporate Computing Era started with the earliest days of computing in the 1950s. Obviously, corporate computing still is, and will continue to be, an important sector of computing. However, around 1980 the primary focus of computing started to shift rapidly away from corporate computing. This was the beginning of the Personal Computing Era.

The Personal Computing Era was about using computers to enhance and empower individuals. Its applications were largely task-centric and focused on enabling individuals to create, display, manipulate, and communicate relatively unstructured information. Software applications such as word processors, spreadsheets, graphic editors, and email were key technologies.

Today we seem to be in the early stages of a new era of computing. A change to the dominant form of computing is occurring that will be at least as dramatic as the transition from the Corporate Computing Era to the Personal Computing Era. This new era of computing is about using computers to augment the environment within which humans live and work. It will be an era of smart devices, perpetual connectivity, ubiquitous information access, and computer-augmented human intelligence.

We don’t yet have a universally accepted name for this new era. Some people call it post-PC, pervasive, or ubiquitous computing. Others focus on specific technical aspects of the new era and call it cloud, mobile, or web computing. The term that I currently prefer and will use for now is “ambient computing.” In the Ambient Computing Era humans live in a rich environment of communicating computing devices and a ubiquitous cloud of computer-mediated information. There will still be corporate computing, and task-oriented applications in the personal computing style will still be used. But the defining characteristic of this era will be the fact that computing is shaping the actual environment within which we live and work.

As I discussed in one of my first posts, a transitional period between eras is an exciting time to be involved in computing. We all have our immediate goals, and much of the excitement and opportunity is focused on shorter-term objectives. But while we work to create the next great web application, browser feature, smart device, or commercially successful site or service, we should occasionally step back and think about something bigger: What sort of ambient computing environment do we want to live within, and is our current work helping or hindering its emergence?

Comments on this entry are closed.

I’d like to use that graphic for my teaching. Do you have it in high resolution?

Would I be allowed to use it?

As stated in this site’s footer: “Unless otherwise noted, all content of this site is licensed under a Creative Commons Attribution-NonCommercial 3.0 United States License”

Because of a fluke, I don’t have the original of the graphic. I screen grabbed this one from the PDF that I also link to in the post. You could do the same if you want a different resolution.

One development in computing that lies before us is a technology that elevates social networking from one-liners, personal gossip and self-promotion to a means for people to effectively debate issues of the day. ‘Effective’ would require structuring debate so that it is immune to disruption. One would also want unfettered public ownership of and access to trusted knowledge without inconvenient search engines. The latter in particular suggests that such ‘ambient computation’, or whatever name it eventually attracts, requires an Internet technology that supersedes the Web.

The very notion of an obsolete Web will be hard to promote, and the legions of those dedicating their time and efforts to improving the Web may even wish for it to be just a fantasy. On the other hand, a rational look at the rate at which technologies have been made obsolete (think of iron core memories and DEC) provides food for thought.

Were such effective debate ever to take off, the Internet would render it global as well as national and local. To the extent that one can attempt to imagine how consensus building on a global scale would affect us politically and economically, this new era may well establish networked computation as a foundation for a new society.

Would cooperative ambient computation not fit nicely into Mozilla’s modus operandi if it accepts that the Web has no birth-right to eternal life?

While I think the browser and “web 1.0/2.0” are transitional technologies, I don’t think I would say that “the Web” is obsolete. Instead I might say that we are still in the early days of the emergence of the Ambient Web.

I think the ultimate shape (dominant technologies, business models, organizations, etc.) of the Ambient Computing Era is something that can’t be planned or orchestrated; it will be emergent. This was also true for the first two computing eras.

It’s important that we all work to shape this new era, but it would be delusional to think that a master plan is going to control what emerges.

Personally, I think “cooperative ambient computing” is the ultimate evolution of the “open web” and as such helping its emergence has a great fit with Mozilla’s mission. That’s why I’m talking about it…